Introduction — framing autonomous systems and scope

Autonomous systems are machines that operate in complex, dynamic environments without direct human intervention. They perceive their surroundings, make decisions, and execute actions to achieve specified goals. From self-driving vehicles and unmanned aerial drones to robotic manufacturing cells and planetary rovers, the application space is broad and expanding rapidly. This technical guide is written for researchers, engineers, and decision-makers. It covers the core components, architectures, learning methodologies, and deployment challenges that define the field today, and it aims to connect theoretical concepts to practical implementation as a roadmap for building robust, safe, and effective autonomous solutions.

Core components overview

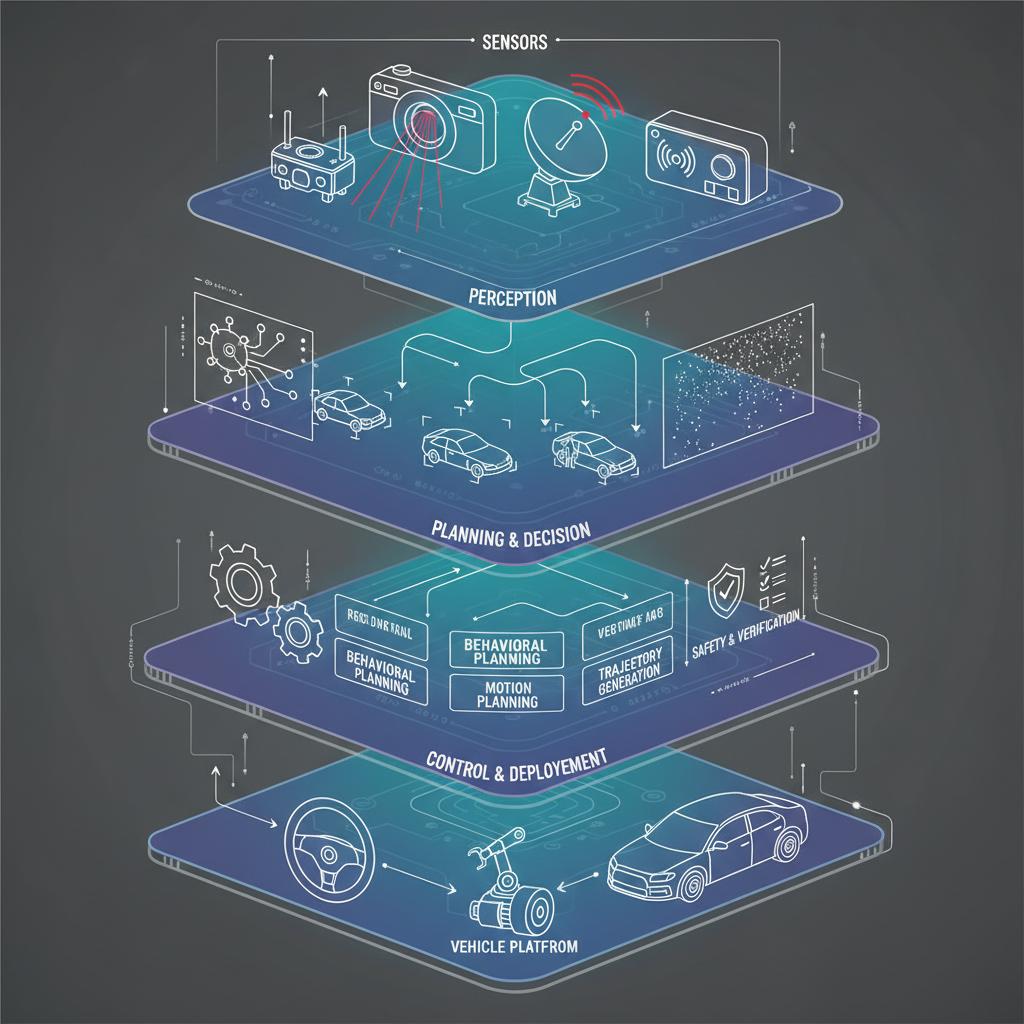

At the heart of all autonomous systems lies a fundamental processing loop: perceive, plan, and act. This cycle dictates how an agent interacts with its environment. Each stage involves a sophisticated interplay of hardware and software components working in concert to enable intelligent behavior. Understanding these building blocks is the first step toward designing and analyzing complex autonomous agents.

Perception and sensor fusion

The perception stack is the sensory interface of an autonomous system, responsible for interpreting raw data from the environment. This is achieved through a suite of sensors, each with unique strengths and weaknesses.

- Cameras (Vision): Provide rich, dense color and texture information, excellent for object classification and scene understanding.

- LiDAR (Light Detection and Ranging): Generates precise 3D point clouds, offering accurate distance measurements and object geometry.

- RADAR (Radio Detection and Ranging): Resilient to adverse weather conditions (rain, fog) and effective at measuring object velocity via the Doppler effect.

- Inertial Measurement Units (IMUs): Measure orientation, angular rates, and linear acceleration, providing critical data for motion estimation.

No single sensor is sufficient. Sensor fusion is the process of combining data from multiple sensors to create a more accurate, complete, and reliable representation of the environment than any individual sensor could provide. Techniques like the Kalman Filter and its variants (Extended Kalman Filter, Unscented Kalman Filter) are commonly used to fuse noisy and asynchronous sensor data into a coherent world model.
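To make the fusion step concrete, here is a minimal sketch of a scalar (one-dimensional) Kalman filter that fuses two range readings of the same obstacle. The names `Estimate`, `kalman_update`, and `kalman_predict`, and all sensor values and variances, are illustrative assumptions rather than anything prescribed by this guide. A real system would use a multi-dimensional state and calibrated noise models.

```python
from dataclasses import dataclass


@dataclass
class Estimate:
    mean: float      # current best estimate of the quantity (here: range in metres)
    variance: float  # uncertainty of that estimate


def kalman_update(est: Estimate, measurement: float, meas_variance: float) -> Estimate:
    """Fuse one noisy measurement into the estimate (scalar Kalman update)."""
    gain = est.variance / (est.variance + meas_variance)  # Kalman gain, between 0 and 1
    mean = est.mean + gain * (measurement - est.mean)
    variance = (1.0 - gain) * est.variance
    return Estimate(mean, variance)


def kalman_predict(est: Estimate, motion: float, process_variance: float) -> Estimate:
    """Propagate the estimate through a motion step; uncertainty grows."""
    return Estimate(est.mean + motion, est.variance + process_variance)


# Hypothetical readings: a precise LiDAR range and a noisier RADAR range.
prior = Estimate(mean=10.0, variance=4.0)
after_lidar = kalman_update(prior, measurement=10.4, meas_variance=0.04)
fused = kalman_update(after_lidar, measurement=9.8, meas_variance=1.0)
```

Each update shrinks the variance, and the low-variance LiDAR reading pulls the estimate much harder than the RADAR reading does. That weighting by confidence is the essence of probabilistic sensor fusion.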

State estimation and localization

An autonomous system must know its own state—its position, orientation, and velocity—relative to the world. State estimation is the process of computing this information. Localization is the specific task of determining the system’s position on a map. A common and powerful technique is Simultaneous Localization and Mapping (SLAM), where the system builds a map of an unknown environment while simultaneously keeping track of its location within it. This is crucial for GPS-denied environments like indoor warehouses or underwater exploration. Probabilistic methods are central to this process, allowing the system to manage uncertainty in its sensor readings and motion models.
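As an illustration of probabilistic localization, the sketch below implements a discrete Bayes (histogram) filter on a known one-dimensional map. It is a much simpler problem than full SLAM, since the map is given. The corridor layout, the sensor probabilities `p_hit` and `p_miss`, and the motion noise `p_exact` are all assumed values chosen for the example.

```python
def normalize(belief):
    total = sum(belief)
    return [b / total for b in belief]


def sense(belief, world, observation, p_hit=0.8, p_miss=0.2):
    """Measurement update: reweight each cell by how well it explains the observation."""
    weighted = [b * (p_hit if cell == observation else p_miss)
                for b, cell in zip(belief, world)]
    return normalize(weighted)


def move(belief, step, p_exact=0.8):
    """Motion update on a cyclic corridor: shift the belief, with some chance of not moving."""
    n = len(belief)
    return [p_exact * belief[(i - step) % n] + (1 - p_exact) * belief[i]
            for i in range(n)]


# Hypothetical map: the robot can only tell "door" from "wall".
world = ["door", "wall", "wall", "door", "wall"]
belief = [1 / len(world)] * len(world)   # start fully uncertain
belief = sense(belief, world, "door")
belief = move(belief, 1)
belief = sense(belief, world, "wall")
```

Because the corridor has two doors, the belief after these steps is split evenly between the cells just past each door. The filter keeps both hypotheses alive until further observations disambiguate them, which is how probabilistic methods manage uncertainty instead of committing to a single guess.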

Planning and decision-making

Once the system understands its environment and its own state, it must decide what to do next. The planning module is responsible for generating a sequence of actions to achieve a goal. This is often broken down into a hierarchy:

- Mission Planning: High-level goal setting (e.g., “drive from point A to point B”).

- Behavioral Planning: Making tactical decisions based on rules and context (e.g., “change lanes,” “yield to a pedestrian”).

- Motion Planning: Generating a precise, collision-free trajectory for the actuators to follow (e.g., a specific path for the wheels to trace).

Algorithms like A* (A-star) and Rapidly-exploring Random Trees (RRTs) are widely used for pathfinding, while decision-making under uncertainty is often modeled using frameworks like Partially Observable Markov Decision Processes (POMDPs).
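For the pathfinding case, a minimal A* sketch on a small occupancy grid is shown below. The grid, the 4-connected unit-cost motion model, and the Manhattan-distance heuristic are assumptions made for the example. Production motion planners typically search over continuous, kinematically feasible trajectories.

```python
import heapq


def astar(grid, start, goal):
    """Shortest 4-connected path on a grid of 0 (free) / 1 (blocked) cells."""
    def h(cell):  # Manhattan distance: admissible for unit-cost 4-connected moves
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    open_heap = [(h(start), 0, start)]   # entries are (f = g + h, g, cell)
    came_from = {start: None}
    best_cost = {start: 0}
    while open_heap:
        _, cost, cell = heapq.heappop(open_heap)
        if cell == goal:
            path = []
            while cell is not None:      # walk parent links back to the start
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        if cost > best_cost[cell]:
            continue                     # stale heap entry, a cheaper route was found
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            if 0 <= nr < len(grid) and 0 <= nc < len(grid[0]) and grid[nr][nc] == 0:
                new_cost = cost + 1
                if new_cost < best_cost.get((nr, nc), float("inf")):
                    best_cost[(nr, nc)] = new_cost
                    came_from[(nr, nc)] = cell
                    heapq.heappush(open_heap, (new_cost + h((nr, nc)), new_cost, (nr, nc)))
    return None  # goal unreachable


grid = [
    [0, 0, 0, 0],
    [1, 1, 0, 1],
    [0, 0, 0, 0],
]
path = astar(grid, (0, 0), (2, 0))
```

Here the only route from the top-left to the bottom-left cell passes through the single gap in the middle row, so the returned path visits seven cells. When no route exists, the function returns `None`.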

Control and actuation

The final step is to translate the planned trajectory into physical action. The control system calculates the necessary commands for the actuators (e.g., motors, servos, steering racks) to execute the desired motion. Proportional-Integral-Derivative (PID) controllers are a classic and effective method for many applications. For more complex systems with multiple constraints, Model Predictive Control (MPC) is often employed, as it can optimize control inputs over a future time horizon while respecting system limits.
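A PID controller can be sketched in a few lines. In the example below, the gains, the time step, and the toy first-order plant (acceleration equals command minus a drag term) are assumptions chosen only to show the loop settling. They are not tuned for any real actuator.

```python
class PID:
    def __init__(self, kp, ki, kd):
        self.kp, self.ki, self.kd = kp, ki, kd
        self.integral = 0.0
        self.prev_error = None

    def step(self, setpoint, measured, dt):
        error = setpoint - measured
        self.integral += error * dt                      # accumulated error (I term)
        derivative = 0.0 if self.prev_error is None else (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


# Toy speed-control loop with an assumed plant model: d(speed)/dt = command - 0.5 * speed
pid = PID(kp=2.0, ki=1.0, kd=0.05)
speed, dt = 0.0, 0.05
for _ in range(400):                                     # simulate 20 seconds
    command = pid.step(setpoint=10.0, measured=speed, dt=dt)
    speed += (command - 0.5 * speed) * dt
```

The integral term is what removes the steady-state error caused by drag: a proportional-only controller would settle below the setpoint. This sketch omits practical safeguards such as output saturation and integrator anti-windup.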

Architectures and software frameworks

The performance and reliability of autonomous systems depend heavily on their underlying software architecture. A well-designed architecture facilitates development, testing, and maintenance while ensuring robustness and fault tolerance.

Middleware and orchestration patterns

Middleware provides a standardized communication layer that allows different software modules to exchange data seamlessly. The Robot Operating System (ROS) is the de facto standard in robotics research and a growing force in commercial applications. It uses a publish-subscribe (pub/sub) pattern, where nodes (processes) can publish messages to topics or subscribe to topics to receive data. This decouples components, allowing them to be developed and tested independently. For large-scale deployments, containerization technologies like Docker and orchestration platforms like Kubernetes are becoming essential for managing distributed software across a fleet of autonomous agents.
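The publish-subscribe pattern itself is small enough to sketch without any middleware. The `MessageBus` class below is a hypothetical, in-process toy written for this example. It is not the ROS API, and the topic names are made up. Real middleware adds inter-process transport, message typing, discovery, and quality-of-service settings.

```python
from collections import defaultdict
from typing import Any, Callable


class MessageBus:
    """Toy in-process topic bus (illustrative only, not a ROS interface)."""

    def __init__(self):
        self._subscribers = defaultdict(list)   # topic name -> list of callbacks

    def subscribe(self, topic: str, callback: Callable[[Any], None]) -> None:
        self._subscribers[topic].append(callback)

    def publish(self, topic: str, message: Any) -> None:
        for callback in self._subscribers[topic]:
            callback(message)                    # publisher never names its consumers


bus = MessageBus()
received = []
bus.subscribe("/scan", received.append)                        # e.g. a mapping node
bus.subscribe("/scan", lambda msg: received.append(len(msg)))  # e.g. a monitoring node
bus.publish("/scan", [1.2, 1.3, 0.9])
bus.publish("/odom", {"x": 0.0})                               # no subscribers: ignored
```

The publisher only knows the topic name, so either subscriber can be replaced or tested in isolation without touching the publishing code. This is the decoupling described above.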

Modularity and redundancy strategies

Modularity is the practice of designing a system as a set of independent, interchangeable modules. This simplifies debugging, enables parallel development, and allows for easier upgrades. For safety-critical autonomous systems, redundancy is non-negotiable. This can be implemented at multiple levels:

- Hardware Redundancy: Using multiple sensors (e.g., cameras and LiDAR) or redundant compute units.

- Software Redundancy: Running multiple, diverse algorithms for the same task (e.g., two different perception models) and using a voter to determine the final output.

- Algorithmic Redundancy: Having fallback behaviors or safe-stop maneuvers that engage if a primary component fails.
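The software and algorithmic levels above can be combined in a simple voter, sketched below. The quorum of two, the use of `None` to mark a failed channel, and the `SAFE_STOP` fallback label are assumptions made for the example.

```python
from collections import Counter

SAFE_STOP = "safe_stop"  # hypothetical fallback action


def vote(outputs, quorum=2):
    """Return the label agreed on by at least `quorum` channels, else the fallback."""
    valid = [o for o in outputs if o is not None]   # None marks a failed channel
    if not valid:
        return SAFE_STOP
    label, count = Counter(valid).most_common(1)[0]
    return label if count >= quorum else SAFE_STOP


agreed = vote(["pedestrian", "pedestrian", "cyclist"])   # two of three agree
degraded = vote(["pedestrian", None, "cyclist"])         # no quorum: fall back
```

The voter returns the majority label when enough channels agree and degrades to the safe fallback otherwise. The voter itself becomes a single point of failure, so in practice it is kept as simple as possible.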

Learning approaches in autonomous systems

Machine learning has revolutionized the capabilities of modern autonomous systems, particularly in perception and decision-making, where hand-crafting rules is infeasible.

Neural networks for perception and prediction

Deep neural networks are the state of the art for many perception tasks. Convolutional Neural Networks (CNNs) excel at processing grid-like data such as images, making them well suited to object detection, segmentation, and classification. For tasks involving time-series data, such as predicting the future trajectories of other agents, Recurrent Neural Networks (RNNs) and variants such as Long Short-Term Memory (LSTM) networks are commonly used.
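To show what a convolutional layer computes on grid-like data, the sketch below applies a single 2D kernel to a tiny image in pure Python. The image and the hand-picked vertical-edge kernel are assumptions for the example. A trained CNN learns many such kernels and stacks them with nonlinearities.

```python
def conv2d(image, kernel):
    """Valid (no padding) 2D cross-correlation, the core operation of a CNN layer."""
    kh, kw = len(kernel), len(kernel[0])
    out_h, out_w = len(image) - kh + 1, len(image[0]) - kw + 1
    return [
        [sum(image[r + i][c + j] * kernel[i][j] for i in range(kh) for j in range(kw))
         for c in range(out_w)]
        for r in range(out_h)
    ]


image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
vertical_edge = [[-1, 1], [-1, 1]]   # hand-picked kernel; a CNN would learn its kernels
response = conv2d(image, vertical_edge)   # [[0, 2, 0], [0, 2, 0]]
```

The response is nonzero only where the window straddles the dark-to-bright boundary. The same small kernel is reused at every position, which is why convolutions suit images.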

Reinforcement learning for sequential decision-making

Reinforcement Learning (RL) is a powerful paradigm for training agents to make optimal sequences of decisions. An RL agent learns a “policy”—a mapping from states to actions—by interacting with an environment and receiving rewards or penalties. This is particularly useful for complex control problems like robotic manipulation, gait generation for legged robots, or optimizing driving behavior. Challenges remain in sample efficiency and ensuring safe exploration, but RL holds immense promise for developing highly adaptive behaviors.
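The idea of learning a policy from rewards can be sketched with tabular Q-learning on a toy problem. The five-state corridor, the reward of 1 at the goal, and the hyperparameters (`alpha`, `gamma`, `epsilon`) are all assumptions for the example. Realistic control tasks need function approximation and far more careful exploration.

```python
import random

N_STATES, GOAL = 5, 4          # states 0..4 on a line; reaching state 4 ends the episode
ACTIONS = (-1, +1)             # action index 0 = move left, 1 = move right


def step(state, action):
    next_state = min(max(state + action, 0), GOAL)
    reward = 1.0 if next_state == GOAL else 0.0
    return next_state, reward, next_state == GOAL


def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]    # q[state][action_index]
    for _ in range(episodes):
        state = 0
        for _ in range(200):                      # step cap per episode
            if rng.random() < epsilon:
                a = rng.randrange(2)              # explore
            else:
                best = max(q[state])              # exploit, breaking ties at random
                a = rng.choice([i for i in range(2) if q[state][i] == best])
            next_state, reward, done = step(state, ACTIONS[a])
            target = reward + (0.0 if done else gamma * max(q[next_state]))
            q[state][a] += alpha * (target - q[state][a])   # Q-learning update
            state = next_state
            if done:
                break
    return q


q_table = train()
policy = [max(range(2), key=lambda i: q_table[s][i]) for s in range(GOAL)]
```

After training, the greedy policy selects "move right" (index 1) in every non-terminal state. The agent was never told where the goal is; the learned values carry that information backward from the reward.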

Simulation to reality transfer techniques

Training autonomous systems in the real world can be expensive, time-consuming, and dangerous. Simulation offers a safe, scalable alternative. However, a gap often exists between the simulated environment and reality (the “sim-to-real” gap). To bridge this, engineers use techniques like domain randomization, where physical parameters in the simulation (e.g., friction, lighting, mass) are varied during training. This forces the learning algorithm to develop a policy that is robust and can generalize to the real world.
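Domain randomization amounts to sampling a fresh simulator configuration for each training episode. In the sketch below, the parameter names, their ranges, and the `SimParams` container are hypothetical. A real setup would pass these values to whatever simulator is in use.

```python
import random
from dataclasses import dataclass


@dataclass(frozen=True)
class SimParams:
    friction: float         # surface friction coefficient
    mass_kg: float          # payload mass
    light_intensity: float  # relative scene brightness


# Hypothetical ranges; in practice they are chosen to bracket real-world measurements.
RANGES = {
    "friction": (0.4, 1.2),
    "mass_kg": (0.8, 1.5),
    "light_intensity": (0.3, 1.0),
}


def sample_params(rng: random.Random) -> SimParams:
    """Draw one randomized simulator configuration, uniformly within each range."""
    return SimParams(**{name: rng.uniform(lo, hi) for name, (lo, hi) in RANGES.items()})


rng = random.Random(42)                                       # seeded for reproducibility
episode_params = [sample_params(rng) for _ in range(1000)]    # one draw per episode
```

Choosing the ranges is the main design decision. If they are too narrow, the policy overfits to the simulator. If they are too wide, training may become needlessly hard or converge to an overly conservative policy.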

Safety, verification, and validation

Ensuring the safety of autonomous systems is the most critical challenge to their widespread adoption. A multi-faceted approach combining formal methods, rigorous testing, and clear metrics is required.

Formal methods and runtime assurance

Formal methods are mathematically based techniques used to prove that a system’s design satisfies certain safety properties (e.g., “the vehicle will never run a red light”). While computationally intensive, they provide stronger assurance than testing alone, although only for the properties and models that were actually specified. Runtime assurance is a complementary approach where a simple, verifiable safety monitor runs alongside the primary complex system (e.g., an AI-based controller). If the primary system attempts an unsafe action, the monitor intervenes and executes a pre-verified safe maneuver.
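The runtime assurance pattern can be sketched as a wrapper around an untrusted controller. Everything in the example below is an assumption made for illustration: the constant-acceleration stand-in for the primary controller, the braking limit, the safety margin, and the stopping-distance check. The sketch shows only the switching logic, and it does not itself prove that the fallback is safe.

```python
def primary_controller(distance_m, speed_mps):
    """Stand-in for a complex (e.g. learned) controller; returns acceleration in m/s^2."""
    return 1.5  # always wants to accelerate, to exercise the monitor


def is_safe(distance_m, speed_mps, accel, dt=0.1, max_brake=6.0, margin_m=2.0):
    """Check that after applying `accel` for dt the vehicle can still brake to a stop."""
    next_speed = max(speed_mps + accel * dt, 0.0)
    travelled = speed_mps * dt + 0.5 * accel * dt * dt
    stopping = next_speed ** 2 / (2.0 * max_brake)    # v^2 / (2a) braking distance
    return distance_m - travelled - stopping >= margin_m


def assured_command(distance_m, speed_mps, max_brake=6.0):
    proposed = primary_controller(distance_m, speed_mps)
    if is_safe(distance_m, speed_mps, proposed, max_brake=max_brake):
        return proposed, "primary"
    return -max_brake, "fallback"                      # assumed safe maneuver: full braking


far = assured_command(distance_m=100.0, speed_mps=10.0)    # primary command accepted
near = assured_command(distance_m=10.0, speed_mps=10.0)    # monitor overrides
```

The monitor is deliberately simple (a closed-form stopping-distance check), so it can be analyzed and verified far more easily than the controller it supervises.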

Testing regimes and metrics

Validation cannot be achieved through a single method. A comprehensive testing strategy includes:

- Simulation Testing: Large-scale testing of logic and algorithms in a virtual environment, including a focus on rare “edge cases.”

- Hardware-in-the-Loop (HIL) Testing: Connecting the actual system computer to a simulator that feeds it realistic sensor data.

- Closed-Course Testing: Operating the physical system in a controlled, real-world environment.

- Public Road/Deployment Testing: The final stage of validation under real-world conditions, often with a safety operator.

Key metrics include disengagement rates (how often a human must take over), mean time between failures (MTBF), and scenario-based success rates.
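These metrics reduce to simple ratios over logged test data. The sketch below shows one way to compute them. The function names, the per-1000-km normalization, and all input numbers are hypothetical.

```python
def disengagements_per_1000_km(disengagements: int, distance_km: float) -> float:
    """Human takeovers normalized by distance driven."""
    return 1000.0 * disengagements / distance_km


def mtbf_hours(operating_hours: float, failures: int) -> float:
    """Mean time between failures: total operating time divided by failure count."""
    return float("inf") if failures == 0 else operating_hours / failures


def scenario_success_rates(results: dict) -> dict:
    """Map each scenario name to the fraction of runs that passed."""
    return {name: sum(runs) / len(runs) for name, runs in results.items()}


# Hypothetical test-campaign numbers.
rate = disengagements_per_1000_km(disengagements=3, distance_km=12_000)   # 0.25
mtbf = mtbf_hours(operating_hours=500, failures=4)                        # 125.0
success = scenario_success_rates({
    "unprotected_left_turn": [True, True, False, True],                   # 0.75
    "pedestrian_crossing": [True, True, True, True],                      # 1.0
})
```

Reporting per-scenario rates alongside the aggregate numbers helps, because a low overall disengagement rate can hide a scenario that fails consistently.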

Human interaction and ethical considerations

Even fully autonomous systems will operate in a world populated by humans. Designing for effective and safe human-robot interaction is essential.

Explainability and human oversight

For a human to trust and effectively supervise an autonomous system, its decisions must be understandable. Explainable AI (XAI) is an area of research focused on developing models that can provide clear reasoning for their outputs. This is crucial for debugging, certification, and enabling human operators to make informed decisions when they need to intervene.

Policy and governance implications

The deployment of autonomous systems raises significant societal questions regarding liability, data privacy, and workforce impact. Developing clear policy and governance is a collaborative effort involving engineers, ethicists, policymakers, and the public. Standards organizations like the International Organization for Standardization (ISO) and professional bodies like the Institute of Electrical and Electronics Engineers (IEEE) play a vital role in creating the technical standards that form the foundation for regulation.

Deployment challenges and real-world case studies

Moving from a research prototype to a production-grade autonomous system introduces a host of new challenges related to reliability, scalability, and long-term operation.

Performance monitoring and maintenance

Once deployed, a fleet of autonomous systems requires continuous monitoring to detect performance degradation or component failure. A robust data pipeline is necessary to log events, anomalies, and sensor data from the field. This data is invaluable for “fleet learning,” where insights from all deployed units are used to train improved models that can be pushed out via over-the-air (OTA) updates, creating a continuous improvement cycle.
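A basic form of degradation monitoring is a rolling average compared against a baseline. The `DriftMonitor` class below is a hypothetical sketch. The metric (for instance, a per-frame detection confidence), the baseline, the tolerance, and the window size are all assumed values.

```python
from collections import deque


class DriftMonitor:
    """Flag when the rolling mean of a health metric falls below baseline - tolerance."""

    def __init__(self, baseline, tolerance, window=50):
        self.baseline, self.tolerance = baseline, tolerance
        self.values = deque(maxlen=window)    # keeps only the most recent samples

    def update(self, value):
        self.values.append(value)
        full = len(self.values) == self.values.maxlen   # wait for a full window
        mean = sum(self.values) / len(self.values)
        return full and mean < self.baseline - self.tolerance


monitor = DriftMonitor(baseline=0.9, tolerance=0.05, window=5)
readings = [0.9] * 5 + [0.7] * 5              # healthy period, then a sustained drop
alerts = [monitor.update(v) for v in readings]
```

Averaging over a window trades detection latency for robustness to single noisy readings. Units that raise an alert are natural candidates for uploading detailed logs to the fleet-learning pipeline.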

Scaling and operational constraints

Operating at scale means dealing with real-world constraints. Edge computing becomes critical, as processing must be done locally on the agent to minimize latency, rather than in the cloud. This places constraints on the computational complexity of algorithms. Power consumption, thermal management, and component durability are all critical engineering considerations that determine the operational viability and total cost of ownership of an autonomous solution.

Future directions and research roadmap

The field of autonomous systems is evolving rapidly, and several research areas are likely to define the next generation of autonomous capabilities. Multi-agent systems, in which teams or swarms of robots collaborate on a task, promise new efficiencies in logistics and environmental monitoring. More generalizable AI that can adapt to entirely new situations with minimal retraining remains a central goal. Strategic roadmaps for 2026 and beyond will increasingly focus on scalable verification and validation techniques for certifying these more complex and adaptive systems, so that they can be deployed safely and reliably in society.

Resources, glossary, and reproducible experiments appendix

Continuing your journey into autonomous systems requires access to quality information and tools. Below is a curated list of resources, a glossary of key terms, and a note on reproducibility.

- Community and Knowledge Bases:

  - arXiv: A widely used open-access repository for preprint research papers, including machine learning and robotics.

  - Autonomous Systems Overview: A high-level introduction to the core concepts from Wikipedia.

- Frameworks and Standards:

  - Robot Operating System (ROS): An open-source set of software libraries and tools for building robot applications.

  - ISO Safety Standards: The official source for international standards related to safety and dependability.

  - IEEE Resources: A hub for publications, standards, and professional communities in engineering and computing.

Glossary:

| Term | Definition |
|---|---|
| SLAM | Simultaneous Localization and Mapping: The process of building a map and localizing within it at the same time. |
| Sensor Fusion | Combining data from multiple sensors to produce more accurate and reliable information. |
| PID Controller | Proportional-Integral-Derivative Controller: A common control loop feedback mechanism. |
| Reinforcement Learning (RL) | A machine learning paradigm where an agent learns to make decisions by taking actions and receiving rewards. |
| XAI | Explainable AI: Artificial intelligence models designed to provide human-understandable justifications for their decisions. |

Reproducible Experiments Appendix: A cornerstone of scientific and engineering progress is reproducibility. When publishing or evaluating work in autonomous systems, a reproducible experiment should include, at a minimum: open-sourced code, clear documentation of the system’s hardware and software architecture, the datasets used for training and testing, and the specific metrics and evaluation protocols employed.