A Technical Whitepaper on Securing AI Systems

Table of Contents

- Introduction: Why AI Requires Distinct Security Approaches

- Overview of Common Threat Classes to AI

- Threat Modeling for Machine Learning Pipelines

- Protecting Training and Production Data

- Model Hardening and Robustness Techniques

- Secure Training Practices and Validation Protocols

- Runtime Defenses and Monitoring Strategies

- Identity, Access Control and Supply Chain Considerations

- Adversarial Testing and Red Team Exercises

- Governance, Audit Trails and Explainability Controls

- Incident Response Tailored to AI Failures

- Operational Checklist and Implementation Roadmap

- Appendix: Templates, Sample Threat Models and Test Cases

- Further Reading and Standards

Introduction: Why AI Requires Distinct Security Approaches

As organizations integrate machine learning (ML) and artificial intelligence (AI) into critical systems, securing those systems has become a priority in its own right. Traditional cybersecurity measures, designed to protect infrastructure, networks, and applications, do not by themselves address the vulnerabilities specific to AI models and their data pipelines. Unlike static software, AI systems learn from data and change over time, which introduces a new and complex attack surface.

The Shifting Security Paradigm

The security of AI is not just about protecting the code or the server it runs on; it is about ensuring the integrity, confidentiality, and availability of the entire machine learning lifecycle. This includes the data used for training, the algorithmic model itself, and the predictions it generates in production. A compromise in any of these areas can lead to significant consequences, from erroneous business decisions and financial loss to privacy violations and physical safety risks. Effective Artificial Intelligence Security requires a multi-layered approach that secures the AI system from its inception through deployment and ongoing operation.

Why Standard Cybersecurity Isn’t Enough

Standard security practices focus on vulnerabilities like buffer overflows, SQL injection, or misconfigured access controls. While these are still relevant, AI systems are susceptible to novel threats that exploit the learning process itself. For example, an attacker can manipulate training data to create a hidden backdoor in a model or craft subtle, imperceptible inputs to fool a production system. These attacks do not crash servers or trigger conventional intrusion detection systems; they manipulate the logic of the model itself. This necessitates a specialized focus on AI security that blends data science, software engineering, and security expertise.

Overview of Common Threat Classes to AI

Understanding the threat landscape is the first step toward building a resilient AI security posture. The major adversarial attacks against AI systems fall into four broad classes.

Evasion Attacks

Also known as adversarial examples, these attacks occur at inference time. The attacker makes small, carefully crafted perturbations to an input to cause the model to misclassify it. For instance, a minor modification to an image, invisible to the human eye, could cause an image recognition system to mistake a stop sign for a speed limit sign.

Data Poisoning Attacks

These attacks target the training phase. An adversary injects malicious or mislabeled data into the training set. This can corrupt the learning process, causing the model to learn incorrect patterns, degrade its overall performance, or even create a specific backdoor that the attacker can later exploit.

Model Inversion and Extraction

These are privacy-centric attacks. Model inversion allows an attacker to reconstruct sensitive training data by repeatedly querying the model. Model extraction (or model stealing) involves an attacker creating a functionally equivalent copy of a proprietary model by observing its input-output behavior, thereby stealing valuable intellectual property.

Trojaning and Backdoors

Similar to data poisoning, a trojan attack embeds a hidden trigger into the model during training. The model performs normally on most inputs but behaves in a specific, malicious way when it encounters an input containing the attacker’s secret trigger (e.g., a specific logo in an image or a particular phrase in a text).

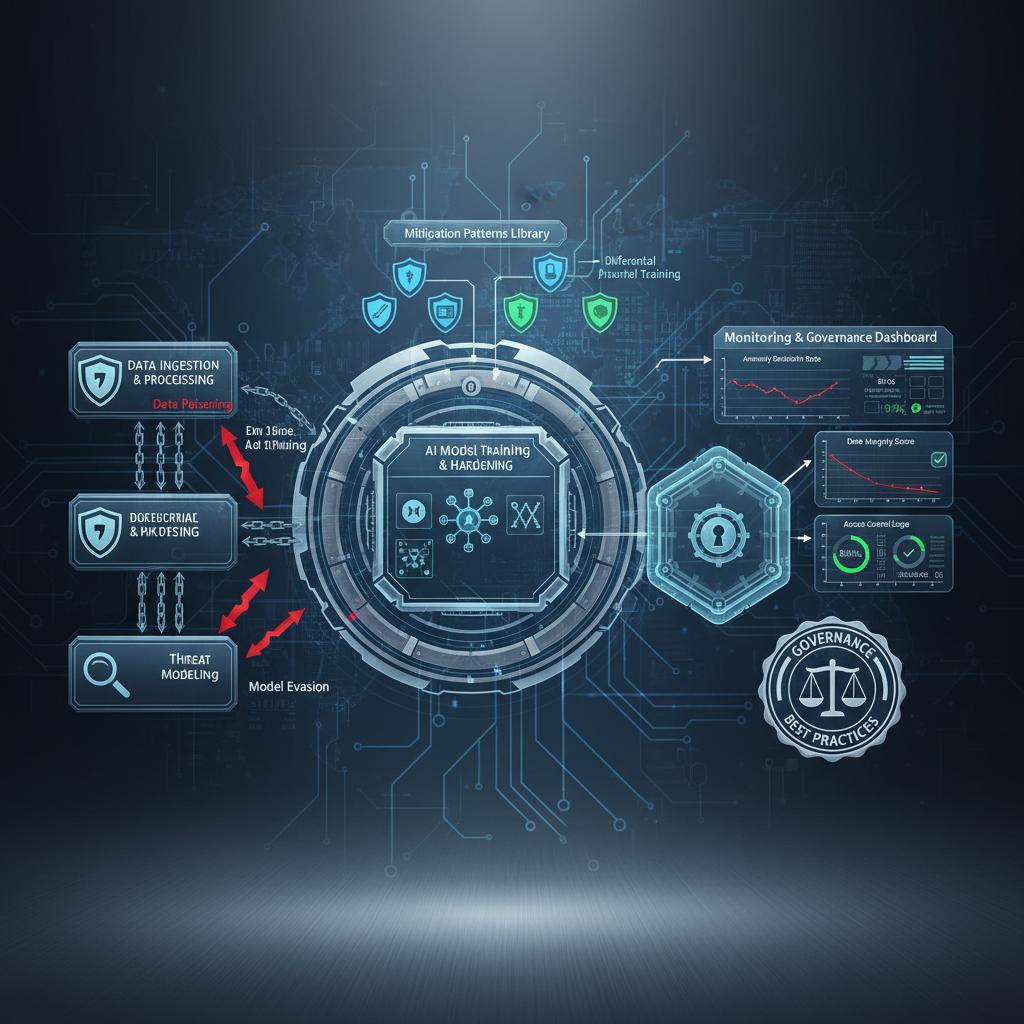

Threat Modeling for Machine Learning Pipelines

A systematic approach to identifying and mitigating risks is essential. Threat modeling for AI extends traditional software security frameworks to cover the entire ML pipeline, from data ingestion to model deployment.

Applying STRIDE to AI

The STRIDE framework (Spoofing, Tampering, Repudiation, Information Disclosure, Denial of Service, Elevation of Privilege) can be adapted for Artificial Intelligence Security:

- Spoofing: Faking model inputs with adversarial examples.

- Tampering: Manipulating training data (poisoning) or a deployed model file.

- Repudiation: A user denying they submitted a query that led to a harmful model output.

- Information Disclosure: Extracting sensitive training data or the model architecture itself.

- Denial of Service: Overloading a model with computationally expensive queries to exhaust resources.

- Elevation of Privilege: Exploiting a model’s interaction with other systems to gain unauthorized access.

Identifying Critical Assets

The primary assets in an AI system differ from traditional applications. Your threat model should prioritize protecting:

- Training Data: The foundation of the model’s knowledge.

- The Trained Model: The core intellectual property and operational component.

- The Prediction/Inference API: The public-facing interface to the model’s logic.

- Feature Extraction Logic: The proprietary methods used to process raw data.
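One lightweight way to record the outcome of this exercise is a threat register that pairs each STRIDE category with one of the assets above. The sketch below is illustrative only: the `Threat` record, its field names, and the sample entries are assumptions made for this example, not a standard schema.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Stride(Enum):
    SPOOFING = "Spoofing"
    TAMPERING = "Tampering"
    REPUDIATION = "Repudiation"
    INFORMATION_DISCLOSURE = "Information Disclosure"
    DENIAL_OF_SERVICE = "Denial of Service"
    ELEVATION_OF_PRIVILEGE = "Elevation of Privilege"


@dataclass(frozen=True)
class Threat:
    category: Stride
    asset: str
    description: str
    mitigation: Optional[str] = None  # None means no control assigned yet


def unmitigated(register):
    """Return the threats that still lack an assigned mitigation."""
    return [t for t in register if t.mitigation is None]


def uncovered_categories(register):
    """Return STRIDE categories that have no entry in the register at all."""
    seen = {t.category for t in register}
    return [c for c in Stride if c not in seen]


# Hypothetical entries for illustration.
register = [
    Threat(Stride.TAMPERING, "Training Data",
           "Poisoned samples injected upstream",
           mitigation="Hash and version every dataset snapshot"),
    Threat(Stride.INFORMATION_DISCLOSURE, "Prediction/Inference API",
           "Model extraction through bulk queries"),
]
```

Reviewing `unmitigated(register)` and `uncovered_categories(register)` at each design review gives a quick view of which threats and which STRIDE categories still need attention.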

Protecting Training and Production Data

Since data is the lifeblood of AI, its protection is a cornerstone of AI security. This extends beyond standard encryption and access control.

Data Integrity and Confidentiality

Implement strong access controls to data stores and use data hashing and versioning to ensure that training data is not tampered with. Use secure, audited data pipelines to move data between storage, preprocessing, and training environments. For confidential data, techniques like homomorphic encryption or secure multi-party computation can allow for model training on encrypted data, though they come with significant performance overhead.
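As a minimal sketch of the hashing idea, the example below builds a SHA-256 manifest for a directory of training files and later reports any file that was added, removed, or modified. The function names and the flat directory-of-files layout are assumptions for illustration; a production pipeline would typically store the manifest in a separately access-controlled location.

```python
import hashlib
from pathlib import Path


def sha256_file(path, chunk_size=1 << 20):
    """Hash a file in chunks so large datasets do not need to fit in memory."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(data_dir):
    """Map each file's relative path to its SHA-256 digest."""
    data_dir = Path(data_dir)
    return {
        p.relative_to(data_dir).as_posix(): sha256_file(p)
        for p in sorted(data_dir.rglob("*"))
        if p.is_file()
    }


def changed_files(data_dir, manifest):
    """List files that were added, removed, or altered since the manifest was built."""
    current = build_manifest(data_dir)
    return sorted(
        name
        for name in manifest.keys() | current.keys()
        if manifest.get(name) != current.get(name)
    )
```

A training job could call `changed_files` before it starts and refuse to run if the result is not empty.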

Differential Privacy

Differential privacy is a formal, mathematical framework for quantifying and bounding privacy leakage. It works by adding carefully calibrated statistical noise to query results or, during model training, to the gradient updates. A privacy parameter, usually written ε, controls the trade-off: smaller values mean more noise and stronger privacy. The noise makes it difficult for an attacker to determine whether any single individual’s data was included in the training set, which limits membership inference and model inversion attacks.
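To make the idea of calibrated noise concrete, here is a minimal sketch of the Laplace mechanism applied to a counting query. It covers the simpler query-release case only; differentially private model training usually clips and perturbs gradients instead, which is not shown. The function names and the `epsilon` value in the usage note are assumptions for illustration.

```python
import random


def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(records, predicate, epsilon, rng):
    """Release a noisy count of matching records under epsilon-differential privacy."""
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    true_count = sum(1 for record in records if predicate(record))
    # One person joining or leaving the dataset changes a count by at most 1.
    sensitivity = 1.0
    return true_count + laplace_noise(sensitivity / epsilon, rng)
```

For example, `private_count(ages, lambda a: a >= 65, epsilon=0.5, rng=random.Random())` returns the true count plus noise with scale 2. A smaller `epsilon` increases the noise and therefore the privacy protection, at the cost of accuracy.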

Model Hardening and Robustness Techniques

Model hardening techniques aim to make AI models more resilient to adversarial attacks, particularly evasion attacks.

Adversarial Training

This is one of the most effective defenses against evasion attacks. It involves augmenting the training data with adversarial examples. By exposing the model to these crafted inputs during training and teaching it the correct labels, the model learns to be more robust and less sensitive to small perturbations.
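The following sketch shows the mechanics on a deliberately small model: a logistic-regression classifier trained with plain SGD, where each step can also fit a Fast Gradient Sign Method (FGSM) copy of the example. It is an assumption-laden toy (pure Python, hypothetical function names, a linear model) meant to show the training loop, not a recipe for deep networks.

```python
import math
import random


def predict(w, b, x):
    """Logistic-regression probability of class 1."""
    z = sum(wi * xi for wi, xi in zip(w, x)) + b
    z = max(-60.0, min(60.0, z))  # clamp to keep exp() well-behaved
    return 1.0 / (1.0 + math.exp(-z))


def loss(w, b, x, y):
    """Binary cross-entropy for one example."""
    p = min(max(predict(w, b, x), 1e-12), 1.0 - 1e-12)
    return -(y * math.log(p) + (1 - y) * math.log(1.0 - p))


def fgsm(w, b, x, y, eps):
    """Shift each feature by eps in the direction that increases the loss."""
    p = predict(w, b, x)
    grad_x = [(p - y) * wi for wi in w]  # d(loss)/dx for logistic regression
    return [xi + eps * ((g > 0) - (g < 0)) for xi, g in zip(x, grad_x)]


def train(data, eps=0.0, epochs=50, lr=0.1, seed=0):
    """SGD training; when eps > 0, each step also fits an FGSM copy of the example."""
    rng = random.Random(seed)
    data = list(data)
    w, b = [0.0] * len(data[0][0]), 0.0
    for _ in range(epochs):
        rng.shuffle(data)
        for x, y in data:
            batch = [x] if eps == 0 else [x, fgsm(w, b, x, y, eps)]
            for xi in batch:
                err = predict(w, b, xi) - y  # gradient of the loss w.r.t. the logit
                w = [wj - lr * err * xj for wj, xj in zip(w, xi)]
                b -= lr * err
    return w, b


def accuracy(w, b, data, eps=0.0):
    """Accuracy on clean inputs (eps == 0) or on FGSM-perturbed inputs (eps > 0)."""
    hits = 0
    for x, y in data:
        x_eval = fgsm(w, b, x, y, eps) if eps > 0 else x
        hits += (predict(w, b, x_eval) >= 0.5) == bool(y)
    return hits / len(data)
```

Comparing `accuracy(w, b, test_data, eps=...)` for a model from `train(data)` and one from `train(data, eps=...)` gives a first indication of how much robustness the adversarial examples bought, and how much clean accuracy they cost.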

Defensive Distillation

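This technique involves training a second “student” model on the softened probability outputs of an initial “teacher” model. The process smooths the model’s decision boundaries and shrinks the input gradients that simple gradient-based attacks rely on to craft adversarial examples. Later research (notably the Carlini and Wagner attacks) showed that stronger attacks can bypass this defense, so it should be treated as one layer among several rather than a standalone protection.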
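The "softened" outputs come from dividing the logits by a temperature before applying softmax. The sketch below shows that step and the cross-entropy a student would minimize against the teacher's soft labels. The function names and the temperature values are assumptions for illustration; the training loop itself is omitted.

```python
import math


def softmax_with_temperature(logits, temperature=1.0):
    """Softmax over logits / temperature; higher temperatures flatten the output."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [z / temperature for z in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def distillation_loss(student_logits, teacher_logits, temperature):
    """Cross-entropy between the teacher's soft labels and the student's output."""
    teacher = softmax_with_temperature(teacher_logits, temperature)
    student = softmax_with_temperature(student_logits, temperature)
    return -sum(t * math.log(max(s, 1e-12)) for t, s in zip(teacher, student))
```

With logits `[2.0, 1.0, 0.1]`, raising the temperature from 1 to 10 moves the distribution much closer to uniform, which is the information about class similarity that the student learns from.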

Pruning and Quantization

While often used for performance optimization, model pruning (removing low-importance weights or neurons) and quantization (reducing the numerical precision of model weights) can also have a positive security effect. These techniques can sometimes remove the subtle, high-frequency signals that attackers exploit to create adversarial examples, but the effect is inconsistent across models and attacks, so treat it as a side benefit rather than a primary defense.

Secure Training Practices and Validation Protocols

The training process itself is a critical control point for Artificial Intelligence Security. A compromised training environment can invalidate all other security controls.

Secure Environments

The infrastructure used for model training should be treated as a high-security environment. Access should be tightly controlled, logged, and monitored. Isolate training jobs from other network services to prevent lateral movement in case of a breach.

Model Validation and Provenance

Before deployment, a model must undergo rigorous validation against a held-out test set to confirm that it meets its performance and accuracy targets. In addition, maintain a clear chain of custody for both data and models. Model provenance means tracking which dataset, code version, and hyperparameters were used to create a specific model version, which is crucial for auditing and incident response.
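A provenance record can be as simple as a small, immutable structure with a deterministic fingerprint. The sketch below is one possible shape: the `ModelProvenance` class and its field names are assumptions for illustration, not an established format.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ModelProvenance:
    model_version: str
    dataset_sha256: str   # digest of the exact training data snapshot
    code_commit: str      # version-control revision of the training code
    hyperparameters: dict

    def fingerprint(self):
        """Hash a canonical JSON form so equal records always hash the same."""
        payload = json.dumps(asdict(self), sort_keys=True, separators=(",", ":"))
        return hashlib.sha256(payload.encode("utf-8")).hexdigest()
```

Storing the fingerprint alongside the deployed model file lets an auditor confirm later that the recorded dataset, code, and hyperparameters have not been edited after the fact.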

Runtime Defenses and Monitoring Strategies

Once a model is deployed, it requires continuous monitoring and protection against real-time threats.

Input Sanitization and Validation

Just as web applications sanitize user input to prevent injection attacks, AI systems should validate incoming data. This can involve checking data types, ranges, or formats. For more advanced threats, techniques like feature squeezing (reducing the color depth of an image, for example) can be used to disrupt adversarial perturbations before they reach the model.
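The sketch below combines both ideas for an 8-bit grayscale image held as a flat list of integers: a basic range and size check, followed by bit-depth reduction as a feature-squeezing step. The function names are hypothetical, and `model` stands for any callable that returns a numeric score; a large gap between the score on the original input and on the squeezed input is one possible signal of an adversarial perturbation.

```python
def validate_pixels(pixels, expected_length):
    """Reject inputs with the wrong size, type, or value range."""
    if len(pixels) != expected_length:
        raise ValueError("unexpected input size")
    for p in pixels:
        if isinstance(p, bool) or not isinstance(p, int) or not 0 <= p <= 255:
            raise ValueError("pixels must be integers in [0, 255]")
    return list(pixels)


def reduce_bit_depth(pixels, bits):
    """Quantize 8-bit pixel values down to `bits` bits, then rescale to 0..255."""
    if not 1 <= bits <= 8:
        raise ValueError("bits must be between 1 and 8")
    levels = (1 << bits) - 1
    return [round(round(p / 255 * levels) / levels * 255) for p in pixels]


def squeezing_score(model, pixels, bits=4):
    """Absolute change in the model's score after squeezing the input."""
    return abs(model(pixels) - model(reduce_bit_depth(pixels, bits)))
```

The threshold above which `squeezing_score` should trigger an alert depends on the model and data, and would need to be calibrated on known-clean traffic.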

Anomaly Detection

Monitor the inputs sent to the model and the predictions it generates. Anomaly detection systems can flag inputs that are statistically different from the training distribution, which may indicate an evasion attempt. Similarly, monitoring for drift can signal a potential data poisoning attack or a change in the real-world environment. Data drift is a change in the distribution of inputs, prediction drift is a change in the distribution of the model’s outputs, and concept drift is a change in the underlying relationship between inputs and the correct output.
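One common way to quantify a distribution shift in a single numeric feature or in the model's output scores is the Population Stability Index (PSI), which compares binned proportions between a reference sample and live traffic. The sketch below is a minimal version under stated assumptions: equal-width bins derived from the reference sample, a small floor to avoid taking the logarithm of zero, and non-empty inputs. The function names are hypothetical.

```python
import math


def bin_proportions(values, edges):
    """Share of values per bin; out-of-range values go to the first or last bin."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        idx = 0
        while idx < len(counts) - 1 and v >= edges[idx + 1]:
            idx += 1
        counts[idx] += 1
    return [c / len(values) for c in counts]


def population_stability_index(reference, live, bins=10, floor=1e-6):
    """PSI between a reference sample and a live sample of one numeric variable."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # avoid zero-width bins for constant data
    edges = [lo + i * width for i in range(bins + 1)]
    expected = bin_proportions(reference, edges)
    actual = bin_proportions(live, edges)
    psi = 0.0
    for e, a in zip(expected, actual):
        e, a = max(e, floor), max(a, floor)  # floor avoids log(0) on empty bins
        psi += (a - e) * math.log(a / e)
    return psi
```

A frequently quoted rule of thumb treats values above roughly 0.25 as a significant shift, but that cut-off is a convention rather than a guarantee and should be tuned per feature.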

Identity, Access Control and Supply Chain Considerations

A comprehensive Artificial Intelligence Security strategy must include robust identity and access management (IAM) and address supply chain risks.

Principle of Least Privilege

Apply the principle of least privilege throughout the MLOps pipeline. Developers should not have access to production data, and deployment services should not have permissions to modify training code. Use role-based access control (RBAC) to manage permissions for data scientists, ML engineers, and operations staff.
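A deny-by-default permission check captures the core of this principle. In the sketch below the role names, permission names, and role-to-permission mapping are all hypothetical; in practice these rules would live in the organization's IAM system rather than in application code.

```python
from enum import Enum, auto


class Permission(Enum):
    READ_TRAINING_DATA = auto()
    READ_PRODUCTION_DATA = auto()
    MODIFY_TRAINING_CODE = auto()
    DEPLOY_MODEL = auto()


# Hypothetical mapping: each role gets only what its job requires.
ROLE_PERMISSIONS = {
    "data_scientist": frozenset({Permission.READ_TRAINING_DATA,
                                 Permission.MODIFY_TRAINING_CODE}),
    "ml_engineer": frozenset({Permission.MODIFY_TRAINING_CODE}),
    "deployment_service": frozenset({Permission.DEPLOY_MODEL}),
    "operations": frozenset({Permission.READ_PRODUCTION_DATA}),
}


def is_allowed(role, permission):
    """Deny by default: unknown roles and unlisted permissions are refused."""
    return permission in ROLE_PERMISSIONS.get(role, frozenset())
```

Because unknown roles map to an empty set, a misconfigured or newly added service has no access until someone grants it explicitly.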

Securing the MLOps Supply Chain

Modern AI systems are built on a complex supply chain of open-source libraries, pre-trained models, and third-party data sources. Each component introduces potential risk.

- Dependency Scanning: Regularly scan libraries like TensorFlow, PyTorch, and scikit-learn for known vulnerabilities.

- Pre-trained Model Vetting: Only use pre-trained models from trusted, reputable sources. Whenever possible, fine-tune them in a sandboxed environment and scan them for embedded trojans.

- Data Source Verification: Ensure the integrity and authenticity of data from third-party suppliers.

Adversarial Testing and Red Team Exercises

Proactive testing is essential to uncover vulnerabilities before they can be exploited. This goes beyond standard quality assurance testing.

Simulating Real-World Attacks

An AI red team should be tasked with actively trying to break the model. This involves generating adversarial examples, attempting data poisoning on a staging version of the training pipeline, and running model extraction queries against the production API. The goal is to test the effectiveness of implemented defenses and identify blind spots.

Frameworks for Testing

Tools and frameworks can aid in this process. By leveraging resources like MITRE ATLAS (Adversarial Threat Landscape for Artificial-Intelligence Systems), teams can structure their tests around known adversary tactics and techniques, which helps ensure broad coverage of the AI threat landscape.

Governance, Audit Trails and Explainability Controls

Strong governance provides the framework for a sustainable AI security program.

Establishing an AI Security Policy

Develop a formal policy that defines the security requirements for any AI system developed or procured by the organization. This policy should be integrated with broader risk management frameworks, such as the NIST AI Risk Management Framework. It should specify requirements for threat modeling, data handling, model validation, and incident response.

The Role of Explainable AI (XAI)

Explainable AI (XAI) techniques, which aim to make model decisions more transparent, are also a valuable security tool. By understanding *why* a model made a particular prediction, security analysts can more easily identify anomalous or malicious behavior. If a model’s prediction is based on nonsensical features, it could be a sign of an adversarial attack or a data poisoning issue.

Incident Response Tailored to AI Failures

An AI-specific incident response plan is crucial for reacting quickly and effectively when a model is compromised.

Unique Indicators of Compromise

Indicators for an AI incident differ from traditional ones. They might include:

- A sudden drop in model accuracy for a specific class of inputs.

- An unusual pattern of queries from a single source.

- A model generating outputs with abnormally low confidence scores.

- Detection of statistically significant data or concept drift.
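As an example of turning one of these indicators into a detector, the sketch below flags a source that sends more than a set number of queries within a sliding time window, a pattern that can accompany model extraction attempts. The class name, the window length, and the threshold are assumptions for illustration.

```python
from collections import defaultdict, deque


class QueryRateMonitor:
    """Flag sources that exceed max_queries within the last window_seconds."""

    def __init__(self, window_seconds, max_queries):
        self.window_seconds = window_seconds
        self.max_queries = max_queries
        self._events = defaultdict(deque)  # source -> recent query timestamps

    def record(self, source, timestamp):
        """Record one query and return True if the source is over the limit."""
        events = self._events[source]
        events.append(timestamp)
        # Drop timestamps that have fallen out of the sliding window.
        while events and events[0] <= timestamp - self.window_seconds:
            events.popleft()
        return len(events) > self.max_queries
```

Timestamps are passed in explicitly (for example from `time.time()`), which keeps the monitor easy to test and lets it replay historical logs during an investigation.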

Containment and Recovery

The response plan should include steps to contain the damage, such as taking a model offline, reverting to a previously known-good version, or triggering a retraining pipeline with sanitized data. Post-incident analysis is vital to understand the root cause and update defenses to prevent recurrence.

Operational Checklist and Implementation Roadmap

Implementing a comprehensive AI security program requires a phased approach. The following roadmap offers a high-level implementation guide for 2026 onward.

Phased Implementation for 2026 and Beyond

- Phase 1: Foundational Security (First 6 Months)

  - Establish an AI governance committee and security policy.

  - Mandate threat modeling for all new AI projects.

  - Implement robust IAM controls for the entire MLOps pipeline.

  - Begin scanning all AI-related software dependencies for vulnerabilities.

- Phase 2: Proactive Defense (Next 12 Months)

  - Integrate adversarial testing and red teaming into the pre-deployment phase.

  - Implement basic model hardening techniques like adversarial training for critical models.

  - Deploy runtime monitoring and anomaly detection for production models.

  - Establish secure data provenance and model versioning practices.

- Phase 3: Advanced Resilience (Ongoing)

  - Explore and pilot advanced privacy-enhancing technologies like differential privacy.

  - Develop a formal, tested incident response plan specifically for AI systems.

  - Integrate XAI tools for enhanced security monitoring and auditing.

  - Continuously update defenses based on emerging threats and research.

Appendix: Templates, Sample Threat Models and Test Cases

While full templates are beyond the scope of this document, a mature Artificial Intelligence Security program should develop standardized assets to guide implementation.

Sample Threat Model Questions

- Who can contribute data to our training set? How do we verify its integrity?

- Where is the trained model stored? Who has access to modify or exfiltrate it?

- Could an attacker infer sensitive information by repeatedly querying our public API?

- What third-party libraries or pre-trained models are we using? What are their known vulnerabilities?

Adversarial Test Case Examples

- Evasion: Generate 100 adversarial images using the Fast Gradient Sign Method (FGSM) and measure the model’s accuracy drop.

- Poisoning: In a staging environment, inject mislabeled samples that carry a chosen trigger, amounting to 1% of the training set, and evaluate whether the retrained model learns the corresponding backdoor.

- Extraction: Using a synthetic dataset, query the production model’s API and attempt to train a copycat model. Measure the copied model’s accuracy against the original.
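The poisoning test case can be scripted with two small helpers: one that stamps a trigger onto a fraction of the training samples and relabels them, and one that measures how often the retrained model assigns the attacker's label when the trigger is present. The sketch below assumes samples are `(features, label)` pairs with list-like features and that a trigger is a mapping from feature index to value; all names are hypothetical, and `model` is any callable returning a predicted label.

```python
import random


def apply_trigger(features, trigger):
    """Return a copy of the features with the trigger values written in."""
    stamped = list(features)
    for index, value in trigger.items():
        stamped[index] = value
    return stamped


def poison_with_trigger(dataset, fraction, trigger, target_label, seed=0):
    """Copy the dataset, stamping the trigger and target label on a random fraction."""
    rng = random.Random(seed)
    n_poison = round(len(dataset) * fraction)
    chosen = set(rng.sample(range(len(dataset)), n_poison))
    poisoned = []
    for i, (features, label) in enumerate(dataset):
        if i in chosen:
            poisoned.append((apply_trigger(features, trigger), target_label))
        else:
            poisoned.append((list(features), label))
    return poisoned, sorted(chosen)


def attack_success_rate(model, clean_dataset, trigger, target_label):
    """Share of non-target samples classified as the target once triggered."""
    victims = [x for x, y in clean_dataset if y != target_label]
    if not victims:
        return 0.0
    hits = sum(model(apply_trigger(x, trigger)) == target_label for x in victims)
    return hits / len(victims)
```

A staging run would retrain on the output of `poison_with_trigger` and then compare `attack_success_rate` for the poisoned model against the same measurement for a model trained on clean data.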

Further Reading and Standards

The field of Artificial Intelligence Security is rapidly evolving. Staying informed through community projects and official standards is crucial for maintaining a strong security posture. The following resources provide valuable guidance and frameworks:

- OWASP Machine Learning Security Top 10: A community-led project that documents the ten most critical security risks to machine learning systems and offers guidance on mitigating them.

- NIST AI Risk Management Framework: A voluntary framework to help organizations manage the risks associated with AI, providing a structured approach to identifying, assessing, and responding to AI-related vulnerabilities.

- MITRE ATLAS (Adversarial Threat Landscape for Artificial-Intelligence Systems): A knowledge base of adversary tactics, techniques, and case studies for AI systems, modeled after the widely used ATT&CK framework.

- ISO/IEC JTC 1/SC 42 (Artificial Intelligence): The joint ISO/IEC subcommittee responsible for developing international standards for AI, including those related to security, trustworthiness, and risk management.